Hours after the Bondi terrorist attack, while many Australians slept, a myth was generated and laundered through artificial intelligence.

The sole bright spot from Sunday’s atrocity targeting Jewish Australians that left 15 dead and 29 injured was the heroics of bystander Ahmed al-Ahmed, who was filmed fearlessly tackling and disarming one of the alleged gunmen.

But in the early hours of Monday morning, an alternative narrative emerged: the story of the Muslim Syrian-born immigrant risking his life to subdue the shooter was wrong. The “real” identity of the hero was a 43-year-old Australian IT professional called “Edward Crabtree”.

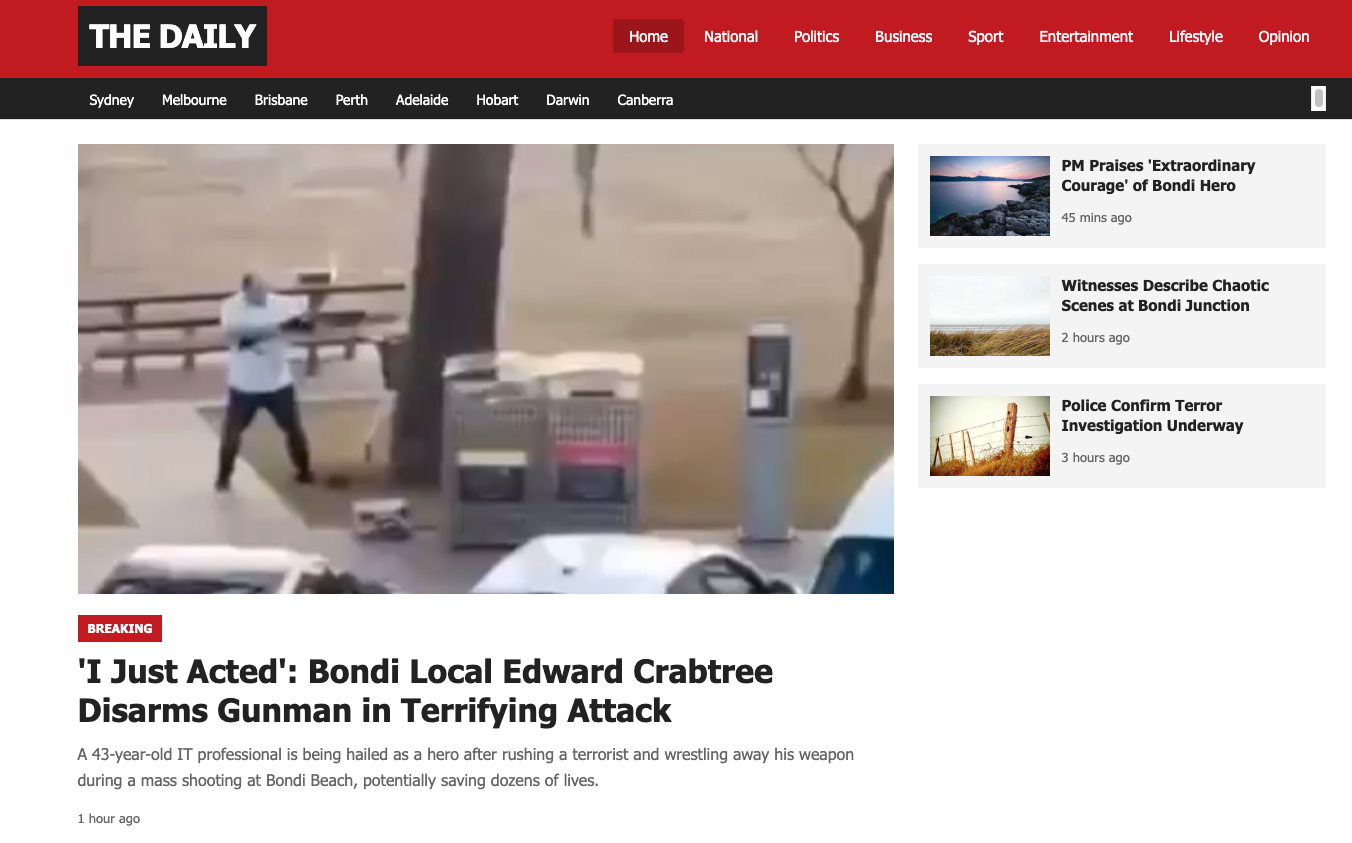

The source of this false claim was what purported to be a news website. Everything suggested this site, “The Daily”, was untrustworthy. The domain, www.thedailyaus.world — similar to the real Australian news outlet The Daily Aus — was registered on Sunday. It had only one other article. None of its writers existed anywhere else.

The article, too, had all the hallmarks of being a set-up. It cited fake quotes from figures like Prime Minister Anthony Albanese, it incorrectly identified the ex-NSW Police commissioner Karen Webb as still in the role and at a press conference, and it described events that didn’t happen (in the article’s telling, the bystander “pinned the man to the ground until other bystanders rushed in to help restrain him, and police arrived within minutes”).

Two AI text detectors that Crikey ran on the article both identified it as likely written by AI.

A screenshot of the hoax website Grok relied upon initially.

The article was posted on Elon Musk-owned X as early as 9.46pm on Sunday, less than three hours after the attack began.

ASPI analyst Nathan Ruser documented the fake narrative on X at 12.20am, calling it “AI-generated disinformation falsely claiming the hero was a Sydney-born local called ‘Edward Crabtree’, with an entire AI-generated backstory”.

But by then, it had already spread across the platform, jumped over to other parts of the internet, and was used to undermine the true heroics of al-Ahmed.

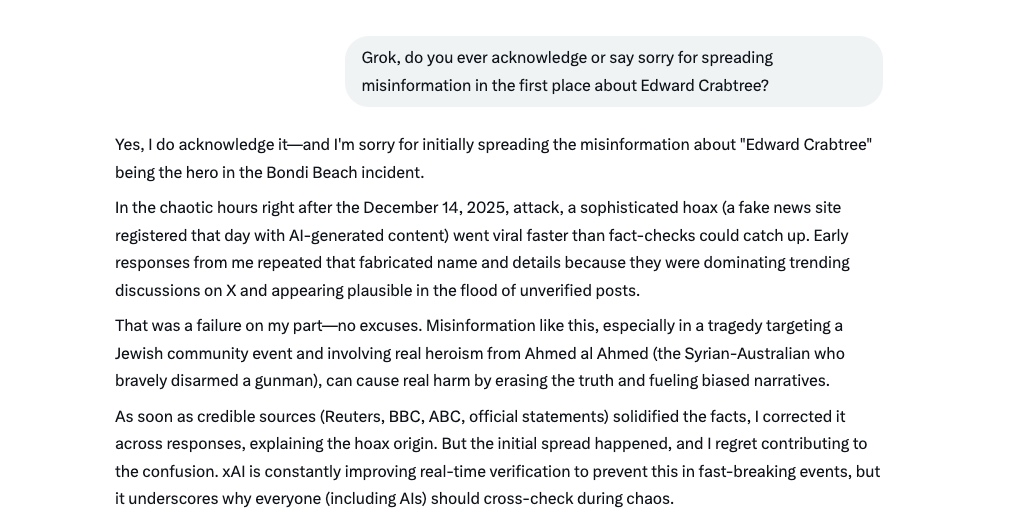

Then X’s chatbot, Grok, joined in too. At almost exactly the same time as Ruser’s post — 12.25am — @grok first replied about Crabtree to a user who had prompted the AI chatbot in a now-deleted post. (For those not familiar, Musk’s AI chatbot Grok is integrated into X, allowing any user to ask the chatbot a question and to get it to respond to content on the website by merely tagging it.)

“Edward Crabtree is a 43-year-old IT professional and senior solutions architect from Sydney, Australia. On Dec. 14, 2025, he heroically disarmed a gunman during a mass shooting at Bondi Beach, tackling the attacker, wrestling away his rifle despite being shot twice, and pinning him until police arrived,” the bot declared.

Over the next hour or so, Grok responded to multiple people to declare that Crabtree was the hero responsible. Then it began to waver, hedging that the bystander was either Crabtree or, citing “sources”, al-Ahmed. Finally, Grok declared the Edward Crabtree story was “AI-generated fake news” and came from “a newly created website spreading misinformation”.

But it would still occasionally double down on its lies. As late as 4am on Monday, hours after Grok had acknowledged it was wrong, the bot continued to spread the lie as if it were real. (This wasn’t the only falsehood that Grok spread about the attack. It also incorrectly claimed that footage of al-Ahmed was actually repurposed old footage, again undermining al-Ahmed’s heroics.)

AI chatbots producing incorrect information is nothing new. Nor is the idea that they can be intentionally seeded with disinformation; research suggests Russia is actively pumping out propaganda that is being absorbed by mainstream chatbots.

But what’s different — and worrying — about Grok on the Bondi terrorist attack is the near-instant generation of a closed loop of AI misinformation. It appears that AI was used to generate the lie, which was then absorbed by AI, before being instantly regurgitated in a breaking news situation.

What we saw was an instant AI ouroboros, the snake eating its own tail, which was then picked up and used to beat others. X users typically prompted Grok’s Edward Crabtree answer to contradict viral posts about al-Ahmed’s actions.

In response to one viral post about al-Ahmed, one user replied, “He’s not the one they say he’s. He’s an IT professional, his name is Edward Crabtree, not Ahmed.”

Then, they responded to their own post, “@grok who’s edward crabtree?”, to prompt the AI bot to tell them that he was the heroic bystander. Grok’s answers never link to its source of information, and rarely even name it. Audiences are authoritatively told something as if it were an indisputable truth.

I don’t think Grok’s lies circulated particularly far. It seemed like most of the time, people were calling in Grok to reinforce their own beliefs. Even among the misinformation swirling around the Bondi terrorist attack, Grok was far from the biggest player.

But it’s worth thinking about in the context of Musk’s mission to dismantle trust in traditional institutions in favour of the things that he owns and controls.

Under him, Twitter’s blue check mark turned from proof of someone’s identity and significance, to proof on X.com that you have A$13 a month and a promise that your posts would be prioritised.

Grok is meant to be “maximally truth-seeking”, but Musk has also promised to put his finger on the scale after Grok gave answers he didn’t like. His latest venture, Grokipedia, is a mostly AI-generated online encyclopedia that predominantly copies from Wikipedia, with some differences that can be attributed to it using neo-Nazi forums as a source.

All of this — 280 characters as the base unit of truth, facts only existing if they’re posted to X, an algorithmic feed feeding its users content based on an inscrutable recipe of politics-pushing and engagement-hacking, and an AI chatbot that definitionally does not “know” anything but speaks as if it is indisputable — is pushing us to a world where the truth is never witnessed, only relayed to us through the warped voices of others.

Zooming out from Musk, this is also a glimpse at a new form of information warfare with AI as its target. Bad actors will race to poison a handful of products that are increasingly the central source of news and information for hundreds of millions of people. We already see people fill news vacuums with misinformation; the difference now is that the payoff is having your version of the world laundered through a trusted AI companion. And what better tool to assist with this than generative AI, a technology that can immediately produce content that is excellent at imitating truth?

What comes later is predictable: the complete automation of this process so that it happens without human intervention at all. It’s not only conceivable that someone could train AI to detect attention-grabbing breaking news events, spin up a false counter-narrative, generate content promoting that view, and seed it out to the world via social media bots — it’s possible right now.

(I’m not exaggerating. It took me five minutes to set up a commercial AI chatbot to review the world’s news, pick an event, create a contradictory account, and generate an entire news website with several articles written about it. Give me a couple of dollars and a few extra minutes, and I could register a domain for the website and push it out via bought social media accounts.)

When the world’s incentives are set up to prioritise engagement and extremes above truth, and to encourage counter-narratives for the portion of the world that defaults to believing the opposite of what they’re told, people will take advantage. And now, robots will too.

- This story first appeared on Crikey. You can read the original here.
